By Peter Rabley and Christopher Keefe

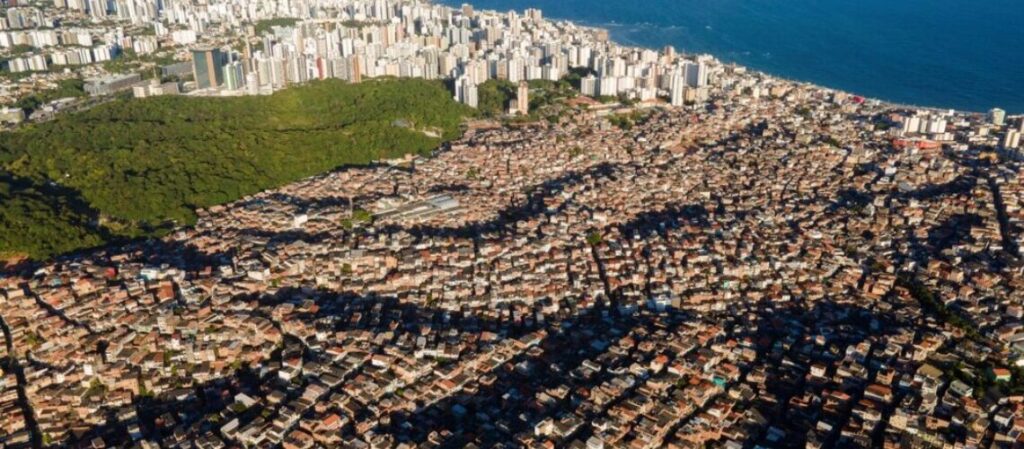

With the exponential growth of satellite-based mapping platforms, mapping data is increasingly abundant, yet much of this data is not readily accessible or affordable in many parts of the world. Much like wealth worldwide, the power and benefits of mapping data are held in too few hands.

We formed PLACE out of a belief that mapping data is an essential public good. Akin to digital infrastructure, it must be available for the benefit of people and societies everywhere. This data is increasingly foundational to the products and services that are fueling today’s data economy in sectors like e-commerce, digital health, fintech, climate analytics and research, insurance, city planning, government services, environmental resilience and much more.

PLACE is our answer to today’s inequality of mapping data.

Once a public good provided by government, mapping data has now become fragmented and marginalized in most of the world. Governments worldwide increasingly find it difficult to maintain the funding and technical resources needed to provide up-to-date, commercial-grade mapping data. Big technology companies like Google and Facebook provide excellent products and services but are beholden to shareholder interests, and their business models are largely predicated on surveillance capitalism. NGOs and open mapping platforms fill a vital role in the ecosystem but do not provide the consistent quality, timeliness and comprehensiveness of data and imagery that the public and private sectors require to deliver critical products and services. For everyone apart from big technology firms, funding is a problem that has proven challenging to overcome.

We created PLACE recognizing the need for a more inclusive, sustainable and shared model to provide the primary mapping data that is fundamental for inclusion in the digital world: a model beholden not to shareholder interest but to the public interest. We believe that the most equitable and sustainable way to provide this essential infrastructure at the scale needed to make a difference is through the creation of a non-profit data trust with open membership. A data trust provides for independent governance and stewardship of data, separate from its creation and maintenance. Our trust will be overseen by an independent board of trustees selected for their commitment to the mission, as well as their experience and know-how related to the trust and its purpose.

Our sustainability model is based on the notion of a club good serving a membership community. Each of our members agrees to a set of membership terms and conditions around the ethical use of data, and all members must be in good standing to have use of the data. In return, members have a say in how the trust is maintained and secured. To be fully sustainable and maintain the fresh data required in today’s fast-changing world, we ask for an annual membership fee, with tiered levels based on the type and size of organization and its usage of data. We also have a separate fee structure for commercial companies that frees them from royalties and share-alike requirements, so that they can realize full commercial innovation from the use of PLACE data. The mapping data we create does not compete with any of the members of PLACE. PLACE is a registered US 501(c)(3) non-profit public charity.

Core Operating Principles

Our work is based on a set of core operating principles that keep us focused on our mission and hold us accountable to serving the public interest:

- Democratizing Data: Mapping data is critical to advance the public interest and should be accessible, affordable and available to people everywhere.

- Government Engagement: Governments are critical partners, and we rely on their active engagement and participation in the geographies we serve. Governments give us license to operate, provide flight clearances and offer further critical support, such as surveying local ground control points that help us ensure high-quality localized aerial and street imagery. Working with government ensures our data meets local mapping needs and matches existing data sets that might be in local datums. Our data is also produced in latitude/longitude so it can be used globally.

- Local Partners: Local partners understand the context and have the knowledge, networks and sensibilities to operate optimally in their own communities. We partner with and provide ongoing contracts to local entrepreneurs and enterprises to produce and update mapping data on behalf of the trust, creating high-tech jobs in local communities. We certify their technical abilities and offer training when needed.

- Fair Pay: We believe people should be paid for their labor, and we reject the notion of free crowdsourcing when it is then used by corporations to drive their profits. While there may be a place for “gig” jobs for certain tasks, such jobs are not suited to producing data consistently at scale. We want to create jobs that are of the greatest benefit to local communities.

- Standards, Simplicity and Impartiality: We set standards for the data we collect and store to ensure compatibility across regions and systems. We keep it simple by deploying two primary imaging systems, one at street level and the other at a typical height of 400 ft above ground. Their sensors collect data that are observable and detectable, and both systems produce high-resolution, high-accuracy imagery and related metadata. Both imaging platforms also collect pollution data (three particulate measures). Our imagery meets or exceeds the specifications needed to support cadastral and other surveying requirements.

- Stakeholder Engagement: We are helping to lead a global community that puts data ethics, transparency, privacy and security at the center of mapping work. We actively promote dialogue, convene stakeholders and create solutions to make progress on the critical issues facing data governance and mapping. We are currently working with The GovLab, Future State and a wide variety of stakeholders to define the long-term governance structure. We anticipate that this structure will include an independent board of trustees to oversee the data trust, hold management to account and ensure that it remains on mission.

- Ethical Use: Our members will agree to a set of guidelines around ethical use of PLACE data. We have been providing ongoing support to the American Geographical Society, Benchmark, Geovation and others that are working to bring greater transparency, ethics and agreed guidelines to the use of mapping data. They recently launched the Locus Charter, a set of common guiding principles to help practitioners and decision-makers use location data for good, and we are incorporating those principles into our work.

- Privacy and Data Security: We will work to ensure that we deploy best practices in privacy and data security, and we will work continually with our board, the data ethics community, the board of trustees and others to maintain the highest standards of integrity in all our activities and practices.

- Club Good: Our model is based on the notion of a club good. Each member agrees to a set of membership terms and conditions around the use of data, a non-rivalrous good, and all members in good standing have use of the data.

The PLACE team has a history of working at the intersection of geography, technology, the internet, philanthropy and finance. We built PLACE because we believe that communities should benefit from the knowledge and data about the places around them. They need and deserve to have the critical digital infrastructure that is powering places in the digital age.

PLACE sits at the center of a vibrant ecosystem of value creation for members and data producers. Our role is to raise financing; to collect, maintain, process and aggregate data; and to create the information systems technology backbone for secure data storage, access and usage. We oversee membership and are responsible for the business operations of the trust, including capacity building, learning and growth.

Our goal is to create basic mapping infrastructure to help improve lives, strengthen public services and better care for the environment and climate.

Thanks to Nigel Edmead, Chelsea Eversmann and Amy Regas for contributing to this blog.
